Object detection has become one of the most transformative technologies in computer vision, enabling machines not only to see but also to understand the visual data in front of them. What was once confined to fiction and film is now used for a range of purposes across various industries, so it’s more important than ever to know how object detection works.

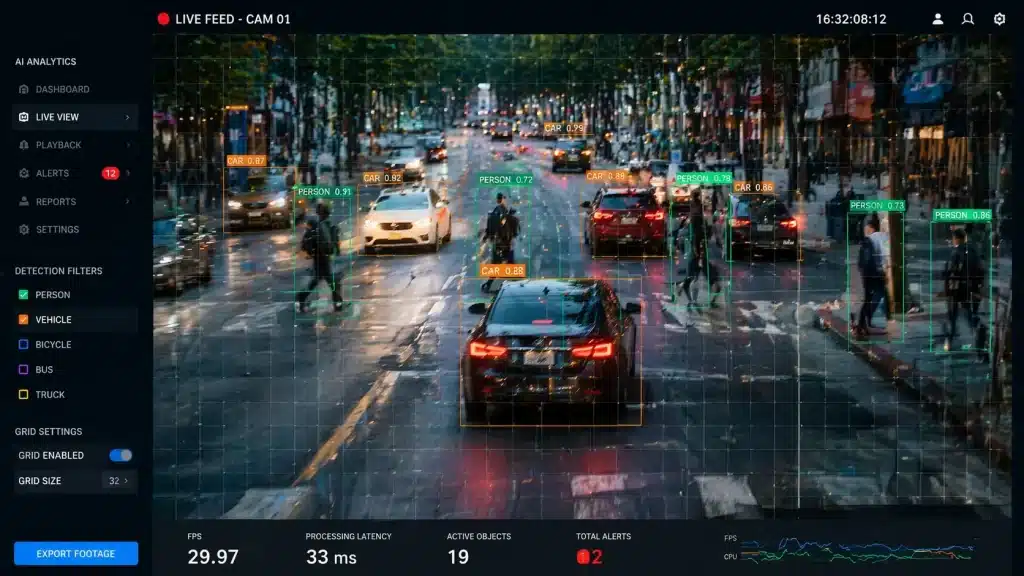

At its most basic level, object detection lets a computer find, identify, and classify multiple objects in an image or video frame at the same time. In contrast to simple image classification, which assigns one label to the whole image, it determines what each object is and exactly where it is. This makes it valuable in everything from self-driving cars to smart surveillance systems.

This article breaks down how object detection works and how it’s being adopted across different industries, highlighting its promising use in security. We’ll also talk about why object detection is a more ethical choice than facial recognition for security purposes, an issue that is a top concern for employers, advocates, parents, and others.

Key Insights

- Object detection is a computer vision task that locates and labels multiple objects in an image or video frame at the same time. It can be trained to look for specific items, depending on the use case.

- Object detection is used for a range of purposes, including weapons detection, retail loss prevention, health screening, and more.

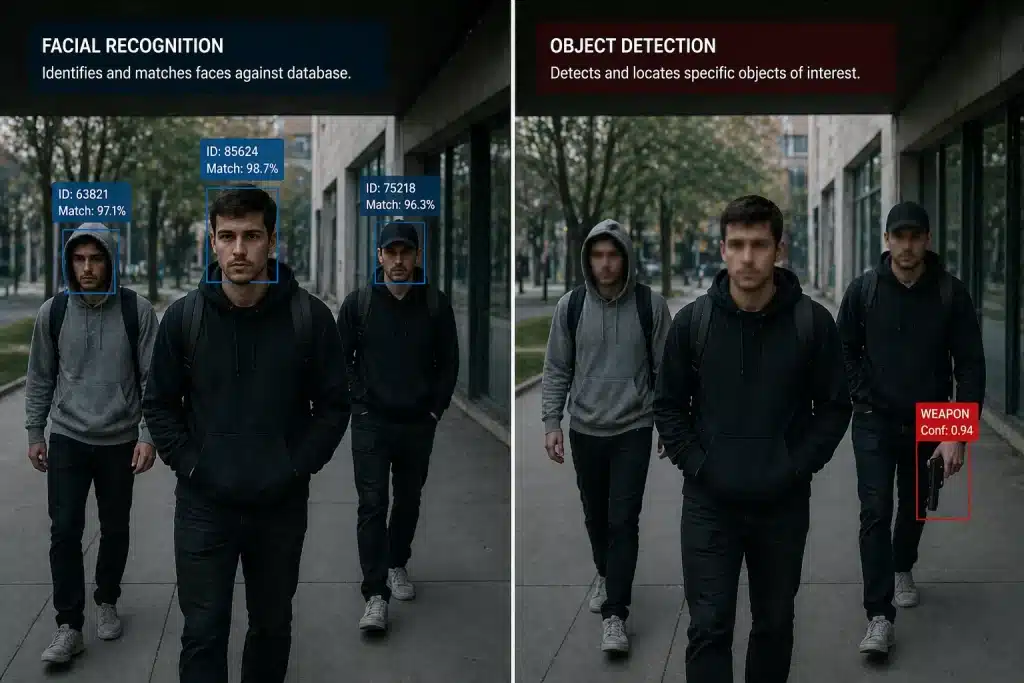

- In the security context, when facial recognition is not absolutely necessary, object detection can be a more privacy-conscious screening method for preventing violence because it focuses solely on finding weapons, not on identifying people.

What is Object Detection?

Object detection is a computer vision task that finds and identifies many objects in an image or video frame at the same time. Object detection doesn’t just answer “what’s in this picture.” It also answers “where” by drawing bounding boxes around each detected item, separating different instances of the same object in the scene, and giving each object a class label, like person, handgun, knife, backpack, or vehicle. Most detection systems also output “confidence scores” or something similar that show how sure the model is about each prediction. For example, a handgun detection with a score of 0.94 tells the security operator verifying it that the model considers the detection very likely to be correct.
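To make these outputs concrete, here is a minimal sketch of how one frame's detections might be represented. The `Detection` class, its field names, and the sample values are hypothetical and chosen only for illustration; real detection libraries each use their own formats.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One detected object (hypothetical structure for illustration)."""
    label: str         # class label, e.g. "person" or "handgun"
    confidence: float  # model's confidence score between 0.0 and 1.0
    x_min: float       # left edge of the bounding box, in pixels
    y_min: float       # top edge
    x_max: float       # right edge
    y_max: float       # bottom edge

    def area(self) -> float:
        """Area of the bounding box in square pixels."""
        width = max(0.0, self.x_max - self.x_min)
        height = max(0.0, self.y_max - self.y_min)
        return width * height


# Two instances of the same class get separate boxes, plus one handgun.
frame_detections = [
    Detection("person", 0.98, 40, 60, 180, 420),
    Detection("person", 0.91, 300, 80, 430, 410),
    Detection("handgun", 0.94, 150, 230, 190, 265),
]

for det in frame_detections:
    box = (det.x_min, det.y_min, det.x_max, det.y_max)
    print(f"{det.label}: confidence {det.confidence:.2f}, box {box}")
```

Each record answers both questions from the paragraph above: the label says what the object is, and the box coordinates say where it is.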

Model-Centric vs. Data-Centric Models of Detection

Modern methods of object detection are typically shaped by either model-centric or data-centric approaches.

A model-centric approach focuses on developing and refining algorithms themselves. This means creating more sophisticated deep learning models and neural network architectures to improve how the system predicts objects’ locations.

On the other hand, a data-centric approach prioritizes the quality and relevance of the training data over the complexity of the model. It does this by collecting more representative datasets, improving labeling precision, and making sure that the imagery reflects real-world conditions with diverse lighting and environmental variations. By favoring quality over quantity, data-centric models can generalize better and operate more reliably in real environments while reducing potential bias.

In practice, effective object detection often relies on a balance of both approaches. Even the most sophisticated models can struggle when trained on unrepresentative data, whereas high-quality, real-world data can significantly enhance the performance of simpler systems. Consequently, organizations are increasingly investing in data integrity alongside model development to create more robust and proactive detection systems.

How Does Object Detection Differ from Other Computer Vision Methods?

It’s important to distinguish object detection from related computer vision techniques. For example, facial recognition technology (FRT) scans and recognizes faces by converting a person’s unique facial features into a digital profile called a “face embedding.” That profile is then compared against a database of known faces to find a match. Image classification, by contrast, assigns a single label to an entire image without any localization. It might tell you “this is a photo containing a dog” but can’t tell you where the dog is.

Instance segmentation, on the other hand, extends detection by delineating objects at the pixel level with masks rather than rectangular bounding boxes, providing more precise boundaries but typically requiring significantly more computational resources.

Object detection is the foundation for countless real-time systems operating today. Traffic cameras use it to count vehicles and detect accidents. Warehouse robots rely on it to avoid obstacles and identify packages. Perhaps most critically, AI-powered CCTV systems employ object detection to try to recognize objects that pose security threats, from a Glock-style handgun being carried into a building to a kitchen knife brandished in a threatening manner.

How Object Detection Works

To understand how object detection works, you need to follow the pipeline that turns raw pixels into useful information.

Getting the Image Ready

The system has to prepare the image before it can detect anything. It does this by resizing the image to a standard size (making all images the same width and height) so the computer can handle them more easily. It might also adjust the colors and brightness to make the image easier to analyze. For video, each frame is processed quickly (10 to 30 frames per second, depending on the system) to keep up with real-time activity.
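As a rough illustration of this step, the sketch below resizes a tiny grayscale image with nearest-neighbor sampling and scales its pixel values to the 0 to 1 range. The function names and the 4x4 target size are assumptions made for the example; production systems typically use an image library and much larger input sizes.

```python
def resize_nearest(image, new_height, new_width):
    """Resize a 2D grid of pixel values using nearest-neighbor sampling."""
    old_height, old_width = len(image), len(image[0])
    return [
        [
            image[row * old_height // new_height][col * old_width // new_width]
            for col in range(new_width)
        ]
        for row in range(new_height)
    ]


def normalize(image):
    """Scale 8-bit pixel values (0-255) into the 0.0-1.0 range."""
    return [[pixel / 255.0 for pixel in row] for row in image]


def preprocess(image, size=4):
    """Resize to a fixed square size, then normalize the pixel values."""
    return normalize(resize_nearest(image, size, size))


# A tiny 2x2 grayscale "image" stands in for a real camera frame.
frame = [[0, 255],
         [255, 0]]
prepared = preprocess(frame, size=4)

# Time available per frame at the video rates mentioned above.
for fps in (10, 30):
    print(f"{fps} fps leaves about {1000 / fps:.1f} ms per frame")
```

The last loop shows why speed matters: at 30 frames per second, preparation and detection together have roughly 33 milliseconds per frame.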

Finding Important Features

The core of an object detection system is a neural network, which is a type of computer program designed to recognize patterns. Early on, it looks for simple things like edges and shapes. As it goes deeper, it starts recognizing more complex features, like the outline of a handgun or the shape of a knife. Different types of networks help the system understand objects of different sizes and details.

Making Predictions

Once the system understands the features, it tries to determine where and what objects are. Some systems work in two steps: first suggesting possible areas where objects might be, then checking those areas closely. Others look at the whole image at once. They divide it into a grid and try to identify the objects in each section. For each object, the system draws a box and labels the object with whatever it thinks it is.
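The grid idea can be sketched in a few lines. In this simplified, hypothetical example, the grid cell that contains the center of an object's box is treated as the cell responsible for that object. The 448-pixel image size and 7x7 grid are illustrative values, not a description of any particular product.

```python
def responsible_cell(box, image_width, image_height, grid_size):
    """Return the (row, col) of the grid cell containing the box center.

    `box` is (x_min, y_min, x_max, y_max) in pixels.
    """
    x_min, y_min, x_max, y_max = box
    center_x = (x_min + x_max) / 2
    center_y = (y_min + y_max) / 2
    # Clamp so a center on the far edge still maps to the last cell.
    col = min(int(center_x / image_width * grid_size), grid_size - 1)
    row = min(int(center_y / image_height * grid_size), grid_size - 1)
    return row, col


# A small box centered at (170, 247.5) in a 448x448 image with a 7x7 grid.
cell = responsible_cell((150, 230, 190, 265), 448, 448, 7)
print(cell)  # (3, 2)
```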

Cleaning Up the Results

Sometimes, detection systems will spot the same object multiple times and draw overlapping boxes on the image or frame. To fix this, many detectors will use a method called non-maximum suppression, which keeps only the most confident box and removes the duplicates.
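A minimal sketch of non-maximum suppression follows. It measures overlap with intersection over union (IoU) and drops any box that overlaps a higher-scoring box by at least a threshold. The 0.5 threshold and the sample boxes are assumptions for illustration, and the sketch handles a single object class.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])
    intersection = max(0, ix_max - ix_min) * max(0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


def non_max_suppression(detections, iou_threshold=0.5):
    """Keep the highest-scoring box in each group of overlapping boxes.

    `detections` is a list of (box, score) pairs for a single class.
    """
    remaining = sorted(detections, key=lambda d: d[1], reverse=True)
    kept = []
    while remaining:
        best = remaining.pop(0)  # most confident box still under consideration
        kept.append(best)
        # Discard boxes that overlap the kept box too much (likely duplicates).
        remaining = [d for d in remaining if iou(best[0], d[0]) < iou_threshold]
    return kept


candidates = [
    ((100, 100, 200, 200), 0.94),  # strong detection
    ((105, 102, 205, 198), 0.81),  # near-duplicate of the first box
    ((400, 300, 460, 380), 0.88),  # a separate object elsewhere in the frame
]
final = non_max_suppression(candidates)
print([score for _, score in final])  # [0.94, 0.88]
```

The near-duplicate box is removed because it overlaps the 0.94 box heavily, while the distant box survives because it does not overlap at all.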

An Example in Action

Imagine a camera in a school hallway. The system processes a video frame and detects three things: two people walking by and one handgun. It draws boxes around each person and the gun, with confidence scores showing how sure it is. The handgun detection triggers an alert so security can respond quickly.
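The hallway scenario could be expressed as a simple rule, sketched below. The threat classes, the 0.80 threshold, and the scores are hypothetical values picked for the example; actual systems define their own classes and thresholds.

```python
ALERT_CLASSES = {"handgun", "rifle", "knife"}  # hypothetical threat classes
MIN_CONFIDENCE = 0.80                          # hypothetical alert threshold


def find_alerts(detections):
    """Return detections that are threat classes at or above the threshold."""
    return [
        d for d in detections
        if d["label"] in ALERT_CLASSES and d["confidence"] >= MIN_CONFIDENCE
    ]


# One processed video frame: two people and one handgun.
hallway_frame = [
    {"label": "person", "confidence": 0.97},
    {"label": "person", "confidence": 0.93},
    {"label": "handgun", "confidence": 0.94},
]

alerts = find_alerts(hallway_frame)
for alert in alerts:
    print(f"ALERT: {alert['label']} ({alert['confidence']:.2f}), notify security")
```

Only the handgun produces an alert. The two people are detected but are not a threat class, so they do not trigger anything.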

Core Approaches and Algorithms in Object Detection

Throughout the 2010s and onward, different types of object detection methods have become popular, each with its own strengths and weaknesses. Some, like YOLO and SSD, focus on being fast enough to work in real time, while others, like Faster R-CNN, aim for higher accuracy even if they work a bit slower. Knowing the differences helps choose the best fit for each situation.

Two-Stage Detectors: The R-CNN Family

Two-stage detectors were among the first deep learning methods for object detection. The first R-CNN, developed around 2014, worked by first proposing many regions where objects might be and then examining each region to figure out what it contained. This method was accurate but slow, taking roughly 40–50 seconds to process one image.

Improvements came with Fast R-CNN in 2015, which sped things up by sharing computation across the whole image, reducing processing time to about 0.3 seconds per image. Faster R-CNN, released later in 2015, went further by having the network itself learn to propose candidate regions, which reduced processing time even more while keeping accuracy high. These methods are very precise, which makes them a good fit when accuracy matters more than speed.

One-Stage Detectors: YOLO and SSD

One-stage detectors changed the game by doing everything in one step, without the need for a separate proposal stage. YOLOv1 (2016) could process images at 45 frames per second, making it fast enough for real-time use. It divided images into grids and predicted objects and their locations all at once. SSD (2016) improved on this by looking at features at multiple scales, helping it find small objects better.

YOLO continued evolving and improving rapidly over the past decade. More recent models can handle more frames per second while still being very accurate. These fast models are used a lot in places where speed is very important, like security cameras and self-driving cars.

RetinaNet, developed in 2017, addressed a prevalent issue in one-stage detectors: during training they see far more background regions than actual objects, and this imbalance can drown out the learning signal from the objects themselves. RetinaNet introduced a loss function called focal loss, which focuses learning on examples the model finds hard to classify, making it more accurate without slowing it down.
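RetinaNet's technique is known as focal loss. A minimal sketch of the binary form is shown below, using the commonly cited defaults of gamma = 2 and alpha = 0.25. The function name and the sample probabilities are illustrative assumptions.

```python
import math


def focal_loss(probability, is_object, gamma=2.0, alpha=0.25):
    """Binary focal loss for a single prediction.

    probability: predicted probability that the region contains an object.
    is_object:   True if the region really contains an object.
    """
    # p_t is the probability the model assigned to the correct answer.
    p_t = probability if is_object else 1.0 - probability
    alpha_t = alpha if is_object else 1.0 - alpha
    p_t = min(max(p_t, 1e-7), 1.0)  # avoid log(0)
    # The (1 - p_t) ** gamma factor shrinks the loss for easy examples.
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


easy_background = focal_loss(0.01, is_object=False)  # confidently correct
hard_object = focal_loss(0.10, is_object=True)       # object the model nearly missed

print(f"easy background loss: {easy_background:.7f}")
print(f"hard object loss:     {hard_object:.4f}")
```

Because easy background regions contribute almost nothing to the total, the many background examples no longer overwhelm the few hard objects during training.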

Transformer-Based Detectors

More recently, transformer-based models like DETR (introduced in 2020) have taken a new approach. Instead of relying on predefined boxes or multiple steps, they look at the whole image and predict objects directly. Early versions were slower, but newer ones like Deformable DETR and DINO are much faster and more accurate, which makes them good candidates for future use.

Training Deep Learning Models

All these object detection methods learn by studying labeled images. During training, a system compares its guesses to the right answers and adjusts itself to get better. This process takes a long time and a lot of computing power; it often runs for hundreds of passes over the training data (or “epochs”) on powerful computers. Data augmentation techniques, such as combining several images into one training example, are one way to help models learn better and become more accurate.
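The guess-compare-adjust cycle can be shown with a deliberately tiny example. The sketch below fits a single weight with gradient descent on made-up data. It is not an object detector; it only illustrates the same learning loop that real detectors run at far larger scale.

```python
def train(examples, epochs=200, learning_rate=0.01):
    """Fit prediction = weight * x by repeated guess-and-correct passes."""
    weight = 0.0
    for _ in range(epochs):                      # one epoch = one pass over the data
        for x, target in examples:
            guess = weight * x
            error = guess - target               # compare the guess to the right answer
            weight -= learning_rate * error * x  # adjust the weight to shrink the error
    return weight


# Made-up labeled data where the right answer is always 2 * x.
examples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
learned = train(examples)
print(f"learned weight: {learned:.4f}")
```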

Object Detection in Various Industries

Object detection technology supports a lot of real-world systems, from transportation systems to smart city infrastructure.

Transportation and ADAS

Advanced driver assistance systems (ADAS) are a fairly widespread use of object detection technology available to the public. Over the past decade, car manufacturers have been building systems that can recognize cars, pedestrians, cyclists, lanes and traffic lights in real time, processing images at up to 30 frames per second. Some cars have cameras that can see all around them (surround view monitoring) and use that information to predict where other road users will go, which can potentially prevent accidents. To keep drivers safe, especially in semi-autonomous cars, these systems need to be able to track objects as they move, work in all kinds of weather, and respond fast.

Industrial Manufacturing and Quality Control

Manufacturing uses object detection to check product quality in ways that can be difficult for people to do consistently. For example:

- Assembly lines can use trained object detection models to find missing screws.

- Beverage companies use it to spot contaminants in bottles before shipping.

- Systems that find and count pallets in warehouses can potentially reduce inventory errors.

These examples show that machine learning can make repetitive inspection tasks easier.

Healthcare and Medical Imaging

Object detection in medical imaging can potentially help healthcare workers save lives. For example, there are systems that analyze CT scans and can find lung nodules with high accuracy, possibly even things that doctors miss. AI mammogram tools have been shown to locate tumors, helping with early detection. For colonoscopies, real-time detection systems can spot polyps with mid-90% accuracy in some studies. These don’t replace doctors; instead, they help doctors diagnose faster, which gives them more time to work on cases and spend face-to-face time with patients.

Retail and Smart Stores

Retail has adopted object detection to streamline and speed up operations. As a part of the (now inactive) Amazon Go stores’ complex detection and no-checkout system, cameras on the ceiling tracked what customers picked up and put in their bags to make shopping easier. In other stores, shelf-monitoring systems can spot when products are out of stock or misplaced, helping stores reduce lost sales. When paired with video analytics, object detection can also support loss prevention by flagging issues before customers leave the store.

Aerial and Environmental Monitoring

With object detection, drones and satellites can help with jobs that used to take a lot of manual work:

- Construction Equipment Management: Many construction sites now use object detection systems to track equipment.

- Parking Lot Occupancy: Parking lot monitoring systems can tell with high accuracy which spots are full.

- Wildfire Hotspot Identification: Object detection can be used to help firefighters find hotspots for wildfires quickly, saving them time and hopefully minimizing damage.

- Illegal Logging Prevention: To protect forests, object detection can be used to find illegal logging activities.

Security-Focused Object Detection: Weapons and Threat Detection

Over the last few years, there’s been a big rise in object detection that focuses on security, especially for weapons and threat detection. These systems scan CCTV or body-worn camera feeds for handguns, rifles, knives, and other threat-related objects in real time.

Traditional security measures rely on human operators monitoring multiple screens, which can quickly become tiring and lead to missed threats. AI-powered weapons detection can help support operators by performing object detection continuously without getting fatigued. This allows security teams to respond proactively rather than reactively.

How AI Gun Detection Works in Practice

For AI gun detection and other visual weapons detection systems to work in the real world, cameras need to send video to an inference engine, which can run on either on-premises servers or a secure cloud environment. The object detection model scans each frame, looking for objects that fit trained threat categories, and it analyzes new frames from each feed several times a second. In many systems, if the model is confident that it has found a weapon, the detection gets sent to a trained human verifier, who confirms or dismisses the alert and acts accordingly.

Modern systems can handle 4K streams at 30 frames per second, with detection providers stating that it takes a fraction of a second for a detection to be made when a weapon becomes visible.

Deployment Environments

Visual AI weapon detection deployments span diverse environments, including:

- K–12 Schools, Colleges, and Universities

- Hospitals and Healthcare Facilities

- Stadiums and Entertainment Venues

- Transit Hubs

- Government Buildings

- Corporate Offices

Visual AI weapons detection can be deployed in a wider range of spaces than traditional weapons screening methods, like metal detectors. It can be used in almost any location where cameras are set up, including parking garages, sports fields, stairwells, and loading docks.

Integration with Security Infrastructure

It’s important to mention that AI weapon detection doesn’t replace existing security systems; it works with them. Many modern systems can connect to video management systems (VMS) so that all of the cameras can be watched from one place. Detected events can be linked to access control logs for comprehensive incident tracking. For serious threats, automated workflows can initiate 911 and emergency response dispatch while simultaneously alerting on-site security.

Critically, AI can be used to help bring events to light, but humans still hold the power to make decisions. With most gun detection systems, when a weapon is found, a human must review the alert and respond; the system never takes action on its own. This human-in-the-loop approach ensures accountability and reduces risks from false positives.

Working Through Challenges with Object Detection

Object detection has come a long way, but it still faces technical and practical challenges, especially in high-stakes security situations where mistakes can be catastrophic.

Technical Challenges

Even the best object detectors can struggle in poor deployment conditions:

- Small or overlapping objects: Small objects, like a concealed handgun that’s less than 10% visible, can lower mAP (mean Average Precision), a standard measure of detection accuracy.

- Cluttered environments: Dense crowds at stadium entrances can reduce precision.

- Adverse lighting: Standard cameras don’t support detection in poorly lit nighttime scenes as well as they do in daylight.

- Motion blur: Fast camera movement or rapid subject motion can degrade detection.

- Occlusion: Partially hidden objects challenge current approaches.

Object detection systems can only find objects in a camera’s view, so maximum coverage is key.

Data and Deployment Issues

To be effective, training data needs to represent how diverse the real world is. Weapons differ by region (rural vs. urban), clothing coverage changes with the season and culture, and lighting conditions range from bright sunlight to dark hallways. Models trained on synthetic datasets may struggle in non-standard situations.

Configuration and Tuning

Careful configuration is needed for effective deployment. Things to consider:

- When possible, object detection should run on all cameras across the campus for full coverage. If budget constraints prevent this, detection should run on cameras selected by priority and need.

- Camera quality matters. Cameras used for visual AI weapons detection should be placed in well-lit areas, mounted and angled to maximize the field of view, and have enough pixel density.

- Based on the risks at each location, security teams set up target classes like “handgun” and “rifle.”

- Setting minimum confidence thresholds helps to balance detection sensitivity against false positive rates.

- Alert escalation workflows should integrate with mobile apps for immediate notification, video management systems for footage review, and access control triggers for automatic lockdown procedures.
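The threshold trade-off mentioned in the list above can be illustrated with a small calculation. The sketch below computes precision and recall at two thresholds using made-up validation results; the scores and labels are hypothetical and exist only to show the effect.

```python
def precision_recall(scored, threshold):
    """Precision and recall when alerting on scores at or above `threshold`.

    `scored` is a list of (confidence, is_real_weapon) pairs.
    """
    true_pos = sum(1 for s, real in scored if s >= threshold and real)
    false_pos = sum(1 for s, real in scored if s >= threshold and not real)
    false_neg = sum(1 for s, real in scored if s < threshold and real)
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 1.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 1.0
    return precision, recall


# Made-up validation results: (confidence, was it really a weapon?)
validation = [
    (0.95, True), (0.90, True), (0.75, False), (0.70, True),
    (0.60, False), (0.55, True), (0.40, False), (0.30, False),
]

for threshold in (0.5, 0.8):
    precision, recall = precision_recall(validation, threshold)
    print(f"threshold {threshold}: precision {precision:.2f}, recall {recall:.2f}")
```

On this sample data, the lower threshold catches every real weapon but raises two false alarms, while the higher threshold raises no false alarms but misses half of the real weapons.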

Accuracy and Safety Trade-offs

To combat errors and maximize the effectiveness of AI gun detection, you can try:

- Fine-tuning on diverse datasets with weapon images from different angles and lighting conditions

- Focal loss implementation to reduce class imbalance errors

- Multi-camera views of the same scene

- Temporal consistency checks tracking objects across frames

- Human-in-the-loop review before serious interventions

Because the stakes are so high in security applications, object detection demands careful attention to both false negatives (missed weapons) and false positives (like phones or umbrellas mistaken for guns).
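One of the mitigations listed above, temporal consistency, can be sketched in simplified form. The hypothetical filter below confirms a detection only if it appears in at least 3 of the last 5 frames. It checks frame-level hits only and does not track individual objects, which a fuller implementation would do. The window and count values are assumptions.

```python
from collections import deque


class TemporalFilter:
    """Confirm a detection only if it appears in enough recent frames."""

    def __init__(self, window=5, required=3):
        self.required = required
        self.history = deque(maxlen=window)  # True/False for each recent frame

    def update(self, detected_this_frame):
        """Record one frame and report whether the detection is confirmed."""
        self.history.append(bool(detected_this_frame))
        return sum(self.history) >= self.required


# A one-frame flicker (for example, a phone briefly mistaken for a gun).
flicker = TemporalFilter()
flicker_results = [flicker.update(hit) for hit in [True, False, False, False, False]]

# A detection that persists across most frames.
steady = TemporalFilter()
steady_results = [steady.update(hit) for hit in [True, True, False, True, True]]

print(flicker_results)  # [False, False, False, False, False]
print(steady_results)   # [False, False, False, True, True]
```

The design trades a short delay for fewer false positives: a single spurious frame never raises an alert, while a persistent detection is confirmed within a few frames.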

The Ethics of Object Detection in Security

Privacy has always been at the top of the conversation in security tech. As AI enters the security space, there have been concerns about how it’s used, by whom and why. For many people, when they think of “AI security,” they think of facial recognition, profiling and fully autonomous AI decision making.

Object detection and facial recognition both analyze video feeds, but they differ fundamentally in what they identify. Object detection focuses on ‘things’ (“handgun in frame”) while facial recognition identifies people (“this is John Doe”). This has major implications for privacy, bias, and civil liberties.

The Object Detection Approach to Privacy

Object detection offers significant privacy benefits over other technologies, like facial recognition:

- No biometric data collection: Object detectors don’t extract or store facial templates.

- No identity tracking: Systems don’t know who individuals are or follow them across cameras.

- No watchlists: There’s no database of “persons of interest” to match against.

- Transient processing: Frames are typically analyzed and discarded without building individual profiles.

Object detection is focused on objects, not identities.

For example, in a warehouse, the system will only look for boxes and pallets, not employees. In an airport, a system may be trained to look for abandoned bags with no owners in sight, not travelers. In the case of weapons detection, which may be used in schools, government buildings, and beyond, the system cares only if dangerous objects appear in frame. Anyone walking past the camera remains anonymous.

Reduced Demographic Bias

While any machine learning model can exhibit bias, facial recognition has repeatedly shown disparate error rates across demographics. These disparities arise because training data can lack racial and gender diversity and because facial recognition relies on physical features that correlate with protected characteristics. Facial recognition is often used outside of law enforcement, from unlocking your phone to confirming your identity when boarding a plane, but in security contexts there is fear that it can lead to profiling and trauma.

Object detection for weapons shows minimal demographic variance. Detection depends on geometric features like the shape of a trigger guard, the length of a barrel and the curve of a blade. These traits have nothing to do with the carrier’s identity.

Regulatory and Social Context

The regulatory landscape shows that people are becoming more wary of AI in security, particularly of facial recognition technology. Between 2019 and 2026, several U.S. cities limited or banned the use of facial recognition, starting with San Francisco (2019), Boston (2020), and Portland (2020).

These restrictions are specifically on biometric identification. Other non-biometric video analytics, like object detection for weapons, trespassing, or traffic monitoring, are still used around the country.

Ethical Use

Responsible weapon-detection implementations follow these principles:

- Data minimization: Only process necessary video feeds

- Limited retention: Store footage only for the minimum number of days needed and encrypt it

- No identity profiling: Don’t link detections to individual identities

- Transparency: Clear signage to inform people of monitoring

- Human oversight: Require human review before serious interventions like law enforcement dispatch

Object detection allows for proactive threat identification without infringing on the civil liberties that facial recognition systems often conflict with. This approach has led organizations nationwide to deploy weapons detection in preference to identity-based systems.

The distinction matters for communities that have experienced discrimination through surveillance. Object-focused detection lets schools and public spaces enhance safety without contributing to the tracking and profiling that’s generated justified backlash against facial recognition.

The Future of Object Detection

Object detection has moved from an academic topic to a technology that saves lives every day. It takes raw visual data that is already coming in through cameras and turns it into actionable intelligence that security teams in a wide range of industries can use.

For security applications, AI gun and weapons detection systems offer something new: the ability to detect threats proactively while respecting privacy in ways other technologies don’t. As schools, hospitals and public venues look for proactive protection, object-focused AI is a way forward that balances safety with civil liberties, so communities can be safe without sacrificing the values they are trying to protect.

Over the next decade, as object detection continues to develop and evolve, there will likely be standardized ethics guidelines and clearer regulatory distinctions between object detection and other computer vision processes, like biometric systems.

Omnilert’s AI gun detection technology was designed with these things in mind, providing data-centric detection capabilities that align with organizations’ privacy expectations and regulatory requirements. Learn more about our technology and how it can fit into your security strategy.

Frequently Asked Questions

What is object detection and how is it different from image classification?

Object detection, a computer vision task, identifies and locates objects within an image or video frame. It’s different from simple image classification because it can determine where and what multiple objects are, instead of just giving one label to the whole image.

How does AI gun detection work in security systems?

AI gun detection analyzes video frames from cameras using object detection models trained to recognize weapons. When a weapon is detected with high confidence, the system alerts human operators for verification, so they can take proactive security measures.

What affects object detection accuracy?

Object detection, like any other technology, can be limited in poor conditions. Small or overlapping objects, bad lighting, occlusion (objects being partially hidden), and the presence of rare objects that have fewer training examples can all reduce detection accuracy.

How is object detection used in industries beyond security?

Object detection is used in self-driving cars for obstacle recognition, in manufacturing for quality control, in healthcare for medical imaging analysis, in retail for inventory management, and in environmental monitoring.

How is object detection deployed responsibly in security?

Responsible deployment includes data minimization, limited video retention with encryption, no identity profiling, transparency with clear signage, and human oversight before taking action based on detections.