In the U.S., tragedies of gun violence have become all too common. Mass shootings have reached record highs, and gun violence is now the leading cause of death among children and adolescents.

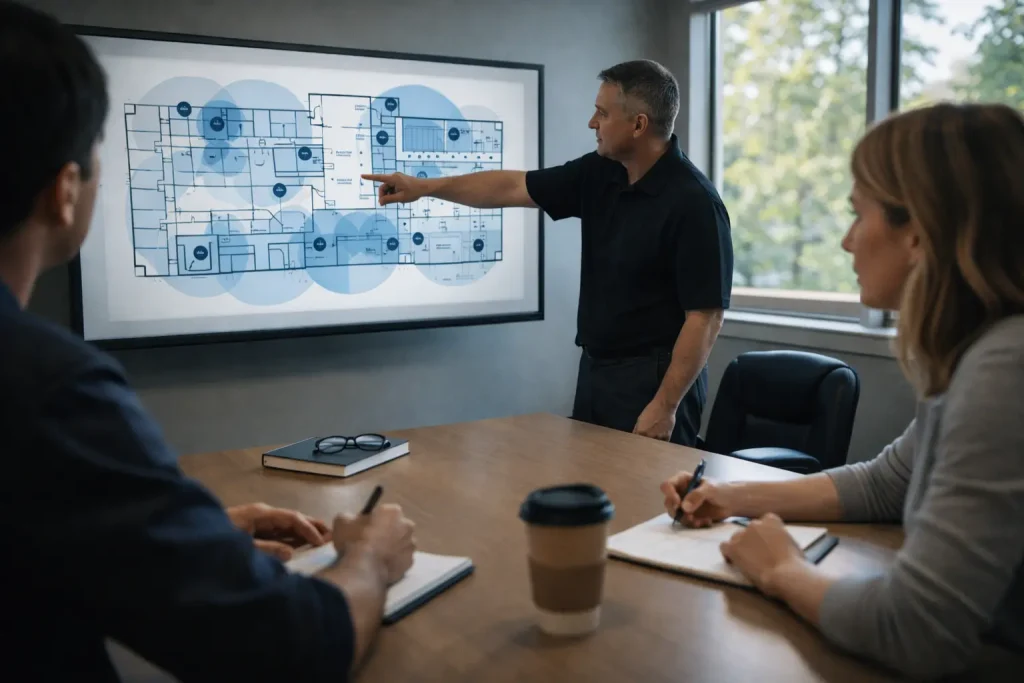

In response, innovators have sought new solutions to prevent harm. One of the most promising solutions is AI-powered gun detection, a technology designed to identify brandished firearms from security cameras in real time, allowing potential threats to be addressed before tragedy occurs. AI gun detection now plays an increasingly vital role in keeping schools, workplaces and public spaces safe.

However, as with any emerging technology, there are misconceptions about what AI gun detection can and cannot do. Some believe these systems operate autonomously and instantly summon police whenever a gun-shaped object appears on camera. This is not the case. The opposite is actually true: humans stay in the driver’s seat, with AI extending their visibility and effectiveness.

AI gun detection is not a decision maker; it is a decision-support tool. It assists trained professionals by identifying objects of concern that might pose a risk, enabling them to make informed decisions based on context, training and established safety protocols.

For executive leadership, this matters because it directly affects operational risk, duty of care, and the overall cost structure of security. With AI gun detection, one person can monitor 10 or even 10,000 cameras simultaneously, vastly expanding visibility without increasing headcount and delivering an immediate efficiency return for businesses of all types.

How Visual AI Gun Detection Works

Visual gun detection software applies AI-powered image and video analysis to feeds from existing security cameras. The software continuously scans those feeds for shapes, contours and visual context consistent with someone brandishing a firearm. When something matches those patterns, it generates an alert for human review that includes both still images and a short video clip.
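To make this concrete, here is a minimal Python sketch of how a detector could bundle a still frame with a short clip covering the moments before and after a flagged frame. It is not Omnilert's implementation or any vendor's API: the `Frame` and `Alert` types, the per-frame `score`, the 0.5 threshold and the pre-roll/post-roll sizes are all hypothetical, and frames are stand-in objects rather than real video.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Frame:
    index: int    # position in the camera stream (hypothetical)
    score: float  # hypothetical detector confidence that a firearm is visible


@dataclass
class Alert:
    snapshot: Frame                           # still image sent for human review
    clip: list = field(default_factory=list)  # frames before and after the detection


def scan_stream(frames, threshold=0.5, pre_roll=3, post_roll=3):
    """Yield alerts that pair a snapshot with surrounding video context.

    Assumes one alert per flagged frame; a real system would also group
    repeated detections of the same object.
    """
    history = deque(maxlen=pre_roll)  # bounded buffer of the most recent frames
    pending = []                      # (alert, frames still needed after detection)
    for frame in frames:
        # Extend alerts that are still collecting post-detection frames.
        still_open = []
        for alert, remaining in pending:
            alert.clip.append(frame)
            if remaining > 1:
                still_open.append((alert, remaining - 1))
            else:
                yield alert
        pending = still_open

        if frame.score >= threshold:
            # Context from before the detection comes from the rolling buffer.
            alert = Alert(snapshot=frame, clip=list(history) + [frame])
            if post_roll > 0:
                pending.append((alert, post_roll))
            else:
                yield alert
        history.append(frame)

    # Stream ended: release remaining alerts with whatever post-roll exists.
    for alert, _ in pending:
        yield alert


stream = [Frame(i, 0.9 if i == 5 else 0.1) for i in range(10)]
alerts = list(scan_stream(stream))
print([f.index for f in alerts[0].clip])  # [2, 3, 4, 5, 6, 7, 8]
```

The fixed-size `deque` keeps memory bounded no matter how long a camera runs, while still giving the reviewer a few frames of lead-up to the detection.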

When assessing vendors, leaders should focus on two essentials: performance and integration. Performance demonstrates whether the technology can be trusted in real-world conditions. Integration with automation determines whether a confirmed threat can be acted on quickly enough to reduce harm.

The Role Of AI Versus The Role Of Humans

AI is extraordinary for vigilance; it never blinks, never sleeps and can process massive amounts of visual information per second. But perceiving nuance and intent is a uniquely human strength. Humans, especially on-site security personnel, can interpret what’s actually happening in a scene: a school resource officer or police officer, a starter pistol at track practice, a toy gun, an ROTC drill, or a ceremonial firearm. They may have relationships with the people in the scene or prior knowledge of a scheduled activity, such as a play rehearsal or a “senior assassin”-type prank involving realistic weapons.

Every reputable AI gun detection platform uses a “human-in-the-loop” model. The AI identifies potential threats; a human reviews and verifies them. This process minimizes false alarms and ensures that responses are appropriate, proportional and aligned with the organization’s safety protocols.

AI is the first step, not the final one. The system flags a potential threat, but a trained person always decides what happens next.

Why Workflows And Training Matter

Even the best technology can fail without clear workflows and trained staff. Effective security requires defined roles, fast verification and a shared understanding of how alerts are handled.

At the facility level, that process typically includes:

1. Detection: The system identifies a potential firearm and notifies trained personnel.

2. Human Verification: A designated reviewer, such as a professional security operations center or a security team member, examines the image and related video clip to determine if the threat appears real. Video can provide context from the moments before and after the detection.

3. Decision Point: If confirmed as a credible threat, automated workflows can notify law enforcement and alert the community. If not, the alert is dismissed, logged and used to improve the system.
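The decision point above can be sketched as a small routine in which nothing escalates without a human verdict: the verdict from step 2 is an input, and every outcome, including a dismissal, is logged. This is a hypothetical illustration rather than any vendor's API; `ReviewVerdict`, `AuditEntry`, `handle_alert`, the `notify_law_enforcement` and `alert_community` callbacks, and the in-memory audit log are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List


class ReviewVerdict(Enum):
    CREDIBLE_THREAT = "credible_threat"
    NOT_A_THREAT = "not_a_threat"  # e.g. a toy, a starter pistol, an on-duty officer


@dataclass
class AuditEntry:
    alert_id: str
    reviewer: str
    verdict: ReviewVerdict
    actions: List[str]


def handle_alert(
    alert_id: str,
    reviewer: str,
    verdict: ReviewVerdict,  # supplied by the human reviewer, never by the AI
    notify_law_enforcement: Callable[[str], None],
    alert_community: Callable[[str], None],
    audit_log: List[AuditEntry],
) -> List[str]:
    """Decision point: run automated steps only after human confirmation."""
    if verdict is ReviewVerdict.CREDIBLE_THREAT:
        # Automated workflows fire only once a person has confirmed the threat.
        notify_law_enforcement(alert_id)
        alert_community(alert_id)
        actions = ["notified_law_enforcement", "alerted_community"]
    else:
        actions = ["dismissed"]
    # Log every outcome so dismissals can be audited and used to improve the system.
    audit_log.append(AuditEntry(alert_id, reviewer, verdict, actions))
    return actions
```

Passing the escalation steps in as callbacks keeps the rule "the AI flags, a person decides" in one place, while letting each organization plug in its own notification channels.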

The time between alert and decision can be just seconds, but those seconds rely on training and confidence. Those who receive detections must know how to interpret the objects of concern, handle uncertainty and de-escalate safely. As with any threat detection, decisions should be made with caution based on the perceived level of risk.

False Positives, Workflow Design And Shared Responsibility

False positives are often discussed as a purely technical limitation of AI gun detection, but in practice, they are better understood as the result of system design choices and workflow ownership, not just detection accuracy. Importantly, visual gun detection systems are intentionally designed with a clear priority: not missing a real firearm.

Because the consequences of failing to detect a genuine threat are severe, these systems are built to surface anything that resembles a gun so that a human can evaluate it. This includes objects that may appear weapon-like in a single frame or limited context. The goal is not for the AI to make a judgment call, but to ensure potential threats are seen by a human rather than overlooked.
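The trade-off behind this design choice can be illustrated with a handful of invented detector scores. The sketch below uses made-up numbers, not measurements from any real system, and counts how many real firearms are missed and how many non-threats are surfaced for review at two hypothetical alert thresholds. `surface_counts` and the example data are assumptions for illustration only.

```python
def surface_counts(scored, threshold):
    """Return (missed_firearms, false_alerts) for a given alert threshold.

    `scored` is a list of (score, is_real_firearm) pairs; both the scores
    and the labels here are illustrative, not real detector output.
    """
    missed = sum(1 for score, real in scored if real and score < threshold)
    false_alerts = sum(1 for score, real in scored if not real and score >= threshold)
    return missed, false_alerts


# Hypothetical detections: real firearms and look-alikes (toys, phones, tools).
examples = [
    (0.95, True), (0.80, True), (0.45, True),  # a partly hidden real gun scores low
    (0.70, False), (0.40, False), (0.20, False),
]

for threshold in (0.75, 0.35):
    missed, false_alerts = surface_counts(examples, threshold)
    print(f"threshold={threshold}: missed={missed}, false_alerts={false_alerts}")
# threshold=0.75: missed=1, false_alerts=0
# threshold=0.35: missed=0, false_alerts=2
```

With these invented numbers, the lower threshold catches every real firearm but sends two look-alikes to a human, which is the trade a system that prioritizes not missing a real gun is deliberately making.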

AI gun detection is not designed to replace human judgment. It exists to augment human vigilance, particularly in environments where a small security staff may be responsible for monitoring hundreds or thousands of cameras simultaneously. AI helps reduce blind spots created by scale and cognitive load, enabling humans to focus their attention where it matters most.

Different vendors support different operational models, and these models directly influence how false positives are defined and managed.

Some vendors offer an end-to-end managed approach, where detection, human verification, and escalation, including law enforcement notification, are handled entirely by the vendor on behalf of the customer. In this model, a false positive typically means a potential threat was incorrectly validated and an emergency response was initiated when it was not warranted.

Other vendors offer flexible or customer-owned workflows, integrating gun detection alerts into an organization’s existing security operations center (SOC), internal response teams, or third-party monitoring partners. In these configurations, the AI system flags an object of concern, but verification and response decisions are made by humans outside of the gun detection vendor itself. In these cases, a false positive may result from human interpretation, local context, or procedural execution rather than the detection technology alone.

Across both models, outcomes are shaped by how technology, people, and process intersect. False positives are influenced by:

- How detections are presented and contextualized, including access to video before and after the alert

- Who reviews alerts and under what conditions, whether centralized or distributed

- The training and experience of reviewers, particularly in interpreting ambiguity

- Defined escalation thresholds, including when and how law enforcement is notified

- Workflow ownership and governance, including logging, auditing, and post-incident review

For organizations evaluating AI gun detection, the focus should extend beyond detection accuracy to include workflow design, staffing, training, and governance. The objective is not to eliminate false positives — an unrealistic goal for any safety system designed to prioritize awareness — but to ensure alerts are handled consistently, proportionally, and with appropriate human oversight.

When AI detection is paired with clear workflows and trained decision-makers, false positives become a manageable and expected outcome of a system designed to maximize visibility, reduce missed threats, and help humans respond more effectively to potential risk.

Recommendations For Evaluating Or Implementing Visual Gun Detection

The mission of AI-driven safety technology is to save lives by augmenting human capabilities. Leaders considering implementing this technology should:

- Conduct a pilot in high-value areas. Deploy the technology on a limited scale in real-world operating conditions to validate the performance and evaluate how it integrates with your existing security ecosystem.

- Audit your existing camera infrastructure. Assess camera placement, resolution, lighting and field-of-view. Most detection failures result from poor video inputs, not the AI itself.

- Build a clear response protocol tied to human verification. Define who reviews detections, how quickly decisions are made and the automated steps that occur following confirmation.

- Develop an internal and external communication plan. Prepare messaging for staff, partners and the community to explain what the system does (and doesn’t do) to reinforce trust, transparency and shared responsibility.

AI can be an extraordinary ally in creating safer workplaces, schools and communities. Yet it is the human side of the partnership—the vigilance, compassion and judgment of trained professionals—that ultimately keeps people safe.

Frequently Asked Questions (FAQs)

Does AI gun detection automatically call the police?

No. Omnilert’s AI gun detection system does not autonomously contact law enforcement. It generates alerts that are reviewed by trained humans, who determine whether to trigger automated escalation based on context, situational intelligence, policy, risk and more.

Is AI gun detection making decisions on its own?

No. Omnilert’s AI gun detection is a decision-support tool, not a decision maker. It identifies objects of concern and surfaces them for human review. Humans always decide what actions, if any, should follow.

How does Omnilert’s AI gun detection scale human capacity?

AI allows a small number of trained professionals to monitor hundreds or even thousands of cameras simultaneously. By continuously scanning video feeds, Omnilert’s technology directs human attention to potential risks that would otherwise be missed due to scale and cognitive limits.

Does AI gun detection replace security staff?

No. It enables security staff to be more effective by reducing blind spots and focusing attention where it matters most. It is designed to support people, not replace them.